Good code is easy now. Good engineers aren’t.

See who's engineering and who's vibecoding. Candidates build on your real codebase with AI, and you see every decision they make.

Free pilot — no credit card required

The Platform

See exactly how every candidate builds.

01 — Create Assessment

Connect your repo. Define your rubric. Send a link.

Link your GitHub repo or choose from pre-built templates. Then define custom evaluation criteria for 10+ AI review agents to score against. Invite candidates with a single link.

02 — Candidate Experience

Candidates build on your actual codebase

Candidates get a browser-based IDE with Claude Code on your actual repo — no local setup required. They build, debug, and refactor real code, exactly how they'd work on the job.

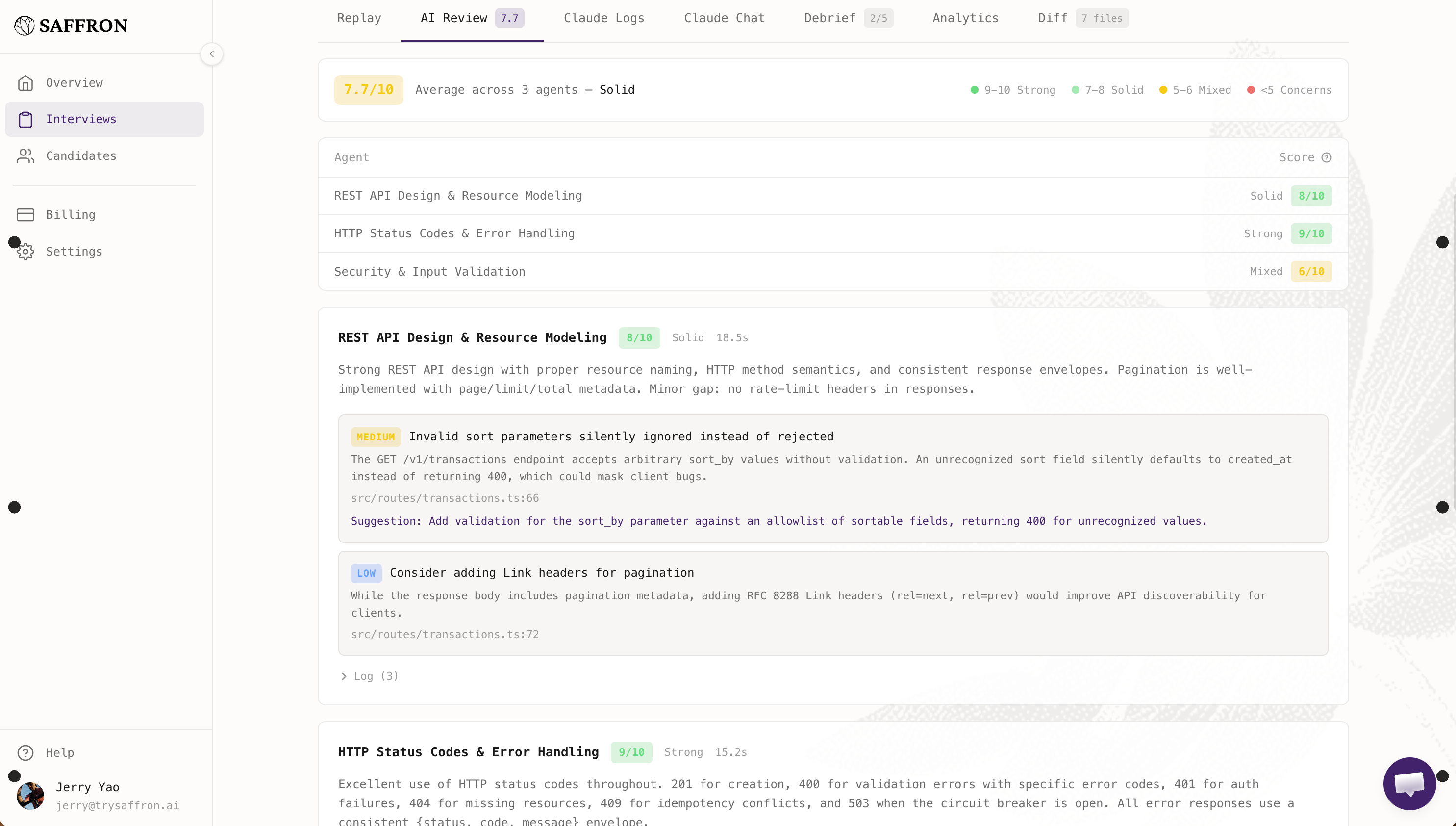

03 — Review Results

Know exactly how they built it

Multiple AI agents score every submission against your criteria. See which code was human-written vs AI-generated, replay the full session, and verify understanding through AI-checked debrief questions.

How It Works

One assessment replaces your entire interview loop.

Your current process

With Saffron

10+ agents

Score every submission independently against your criteria.

Every line

Classified as human-written, AI-generated, or AI-modified.

Full replay

Every keystroke, every prompt, every decision — reviewable.

Compare

Side by side, it's not close.

What interviews tell you

“They solved the algorithm.”

What Saffron shows you

They architected a real feature on your codebase, iterated 3 times, and used AI for boilerplate while writing core logic by hand.

What interviews tell you

“We don’t know how they used AI because we banned it.”

What Saffron shows you

Every prompt, every AI suggestion accepted or rejected, every iteration — captured and scored.

What interviews tell you

“The panel gave mixed feedback.”

What Saffron shows you

10+ independent agents scored against your criteria, with evidence cited for every rating.

Competitors

How Saffron compares.

Every platform has its own valuable features. Here's why Saffron wins.

What candidates build

AI tools

How work is scored

Session visibility

Code attribution

Interviewer time

See how your next hire actually builds.

See every line of code, every AI interaction, every decision — before you make an offer.

No credit card required. Free pilot available.